Advanced Driver Assistance Systems, or ADAS, is the fastest growing segment in automotive electronics [1][2]. The purpose of ADAS is to automate driving tasks and improve driving safety. Many ADAS features are already built into today’s cars, such as adaptive light control, adaptive cruise control, lane departure warning, traffic sign recognition and many more. Almost all car manufacturers and all leading suppliers such as Bosch, Autoliv, Continental, Mobileye and many others are working on ADAS, and the final goal is to build a car that can drive completely autonomously, without any driver involvement. The Society of Automotive Engineers (SAE) has defined six levels, numbered 0 to 5, to describe the degree of automation [3].

Table 1: Six levels of automation in ADAS

The higher the desired automation level, the larger the validation effort required to develop these assistance systems. The majority of the ADAS functions built into mass production cars today are between levels 2 and 4. For these systems, millions of kilometers of driving need to be captured and simulated before the final control units are production ready.

Sensors

The majority of the data volume today is produced by video sensors. However, there are many other sensors generating data:

• Radar

• Lidar

• GPS

• Ultrasonic

• Vehicle Data

For ADAS development, data needs to be captured over millions and millions of kilometers and stored centrally for data enrichment (for example, video frames need to be tagged with environmental context, weather conditions, object identification and much more). Car manufacturers and suppliers have fleets of cars, equipped with all kinds of sensors, which drive around the world to capture data.

This is the REAL Big Data

Data granularity and the resolution of video, radar and lidar are constantly evolving. Imagine a state-of-the-art front-looking radar (FLR) operating at 2800 Mbit/s. A typical sub-project for ADAS development and simulation may require 200,000 km of captured data. Let’s assume the data is recorded at an average speed of 60 km/h. That means: for 200,000 km at 60 km/h we capture 3,333.3 hours of data.

Given the data rate of 2800 Mbit/s = 350 MB/s = 21 GB/min = 1260 GB/h

Considering the 3,333.3 hours to capture, we end up with 1260 GB/h * 3,333.3 h ≈ 4.2 PB
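The arithmetic above can be reproduced with a few lines of Python. This is only a sketch of the calculation in the text. The function name is made up for illustration, and decimal units (1 GB = 1000 MB, 1 PB = 1,000,000 GB) are assumed.

```python
def captured_data_volume(rate_mbit_s, distance_km, avg_speed_kmh):
    """Return (recording hours, petabytes) for a single sensor.

    Assumes decimal units: 1 GB = 1000 MB and 1 PB = 1,000,000 GB.
    """
    hours = distance_km / avg_speed_kmh          # time spent recording
    mbytes_per_s = rate_mbit_s / 8               # 8 bits per byte
    gbytes_per_h = mbytes_per_s * 3600 / 1000    # MB/s -> GB/h
    petabytes = gbytes_per_h * hours / 1_000_000
    return hours, petabytes


# The radar example from the text: 2800 Mbit/s, 200,000 km at 60 km/h.
hours, petabytes = captured_data_volume(2800, 200_000, 60)
print(f"{hours:.1f} h of recording, {petabytes:.2f} PB")
```

Changing the distance to one million kilometers, or multiplying by the number of sensors in the car, shows how quickly the volume grows.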

And this is only for a single radar sensor. Now consider that usually a million kilometers or more are required and that a high-end car easily contains more than 10 different sensors. That is HUGE!

I recently learned from a leading ADAS development company that a typical data set from a single car is about 30 TB/day. A fleet of 10 cars produces 300 TB a day. All this data needs to be stored, labeled, cleaned, managed, backed up, etc.

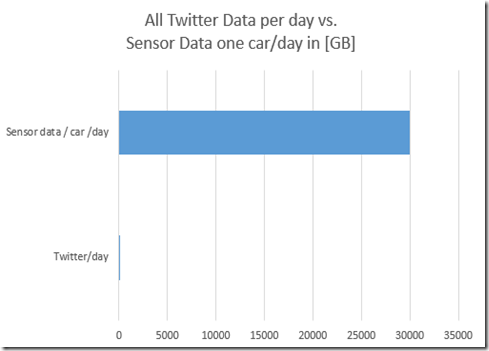

To illustrate the order of magnitude of the data being processed and stored: on Twitter, about 270 million users produce about 100 GB per day. A single test vehicle produces about 30 TB per day, which is 300 times the volume of all Twitter data per day. And this is just the current state of technology.

Figure 1: Daily generated data by all Twitter users vs. sensor data from one car.

For a fully autonomous car at SAE level 5, an estimated 200 million kilometers of sensor data are required to simulate a sufficient range of conditions for software development and validation. Considering the ongoing move to higher resolutions and data rates, these data volumes are staggering.

Regulatory, data retention and other requirements

In addition to the sheer data size for an active development project, there are several regulatory and/or contractual requirements. For example, data must be kept for 15 years or even longer, depending on country regulations or contractual obligations. And for every past project the vendor needs to be able to run simulations within a reasonably short timeframe. This means you need to keep the data online. At this size, it’s not possible to archive data on tape and restore it in a reasonable time.

Other challenges are:

- As of today, very little data can be re-used across projects. If a new car model has new sensors, other sensor positions or new features, the whole set of data needs to be captured and simulated again for the development process and validations.

- Data must be protected against data loss due to hardware or site failure

- Data must be protected against human error or malicious application behavior

- Data governance policies need to be enforced

- Performance requirements must be met (see below)

The Workflow

Hardware in the Loop Simulation

A simplified workflow is illustrated in figure 2. Data from the various sensors is typically stored on SSDs built into the cars. At the end of a capture drive, the SSDs are typically shipped to a location where they can be read and loaded into a central storage system. Imagine you have a fleet of ten cars: about 200-300 TB will likely be ingested per day. To ingest 300 TB within 8 hours, the storage system needs to be able to write data at roughly 10 GB/s.

One of the next steps is data enrichment. Video, radar and lidar sequences need to be labeled and indexed so that developers can query for specific sequences (e.g. show me all video sequences where visibility was poor while the car cruised at 30 km/h towards a traffic light). Test cases are defined and configured by the development team and potentially queued in a job queueing system.
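The ingest figure quoted above is a simple division of volume by time. The sketch below shows the calculation. The function name is hypothetical, decimal units (1 TB = 1000 GB) are assumed, and protocol or filesystem overhead is ignored.

```python
def required_write_rate_gb_s(ingest_tb, window_hours):
    """Sustained write rate in GB/s needed to ingest `ingest_tb` within the window.

    Assumes decimal units (1 TB = 1000 GB) and ignores any overhead.
    """
    return ingest_tb * 1000 / (window_hours * 3600)


# Ten cars at about 30 TB/day each, ingested within an 8-hour window.
rate = required_write_rate_gb_s(300, 8)
print(f"{rate:.1f} GB/s sustained write throughput")
```

A shorter ingest window or a larger fleet raises the required rate proportionally, so the window is worth fixing early in the sizing exercise.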

For simulation, the data streams are then read by servers and streamed on to the devices under test. In the case of video, this is typically a camera with an embedded Electronic Control Unit (ECU). Instead of recording through the camera optics, the data stream is fed into the camera; the ECU analyzes the stream and sends the relevant driving commands back to the servers. The test software can then validate whether the ECU reacted properly to the scenario (e.g. initiating an automatic braking operation if a pedestrian is recognized crossing the street in front of the car).

Because the real physical device (camera and ECU) is part of the simulation workflow, this setup is called Hardware in the Loop (HIL). HIL testing is typically run in real time, i.e. the video sequences cannot be played back in fast-forward mode. This is due to quality assurance reasons as well as regulatory requirements. Because of this, the only way to shorten the overall test and simulation time is to run dozens and sometimes hundreds of HIL servers and devices in parallel.
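To get a feel for why so many HIL stations are needed, the real-time constraint can be turned into a small calculation. This is an illustrative sketch only. It reuses the 200,000 km at 60 km/h example from earlier, the 72-hour deadline is an assumed value, and it pretends the recordings split perfectly evenly across stations with no setup time.

```python
import math


def hil_stations_needed(recorded_hours, deadline_hours):
    """Stations required when each one replays data strictly in real time."""
    return math.ceil(recorded_hours / deadline_hours)


def wall_clock_hours(recorded_hours, stations):
    """Elapsed time if the recordings are spread evenly over all stations."""
    return recorded_hours / stations


recorded = 200_000 / 60  # hours of recordings in the earlier example

# Assumed target: re-simulate everything within 72 hours.
print(hil_stations_needed(recorded, 72), "stations needed")
print(f"{wall_clock_hours(recorded, 100):.1f} h with 100 stations")
```

Since playback speed is fixed, adding stations is the only lever, and every added station is another parallel read stream on the storage system.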

Figure 2: Hardware in the Loop data simulation – simplified workflow

Software in the Loop Simulation

Compared to HIL simulation, a software-based simulation can run much faster. Here, the ECU software runs in a simulator, and much more compute power is available on commodity x64 server hardware. Also, the simulation may run at accelerated speed. Since the device under test is implemented in software only, this method is called Software in the Loop (SIL, see figure 3). SIL simulation can be run in early stages of the development cycle, and the demand for it is increasing. However, quality and regulatory requirements limit the number of simulation cycles that can be run as SIL simulation. This might change going forward. For now, the majority of all tests run in HIL environments.

Figure 3: Software in the Loop data simulation – simplified workflow

Hadoop data access, RESTful API and Machine Learning

OneFS provides access to the data not only through typical NAS protocols like NFSv3, NFSv4, SMB2, SMB3, FTP and HTTP but also via a native HDFS interface [8] and a RESTful API [10]. That means Hadoop compute nodes can seamlessly access data that resides on Isilon OneFS. Companies are starting to apply machine learning to sensor data for better decisions. Also, sensor data is joined with data from external sources such as OpenStreetMap geographical data or weather data. Doing so enables new functionality, improves driving safety and even opens up new business models. The following picture illustrates such a workflow, where data is ingested through SMB, modified and enriched via NFS, read through HDFS and joined with other data using the REST API.

Figure 4: Multi-protocol access to OneFS via NFS, SMB, HDFS and REST API to the very same data.

ADAS requirements for the Storage System

The majority of automotive OEMs and suppliers working on ADAS use the Dell EMC Isilon Scale-Out NAS system, and not only because it satisfies the capacity requirements mentioned above. Let’s have a look at the most important requirements:

Capacity and Simplicity

As explained above, an ADAS environment needs a storage system that scales to multiple petabytes in a single filesystem. This means it needs a node-based architecture that allows capacity to be added when new requirements arise, with no downtime or impact on running simulations. At the same time, the architecture must avoid traditional RAID designs that force the administrator to deal with hundreds or even thousands of RAID arrays, aggregates, volumes and filesystems. Isilon’s filesystem OneFS delivers exactly that: it is a node-based architecture that scales up to 144 nodes (400 nodes planned going forward). Due to its cluster-wide erasure coding, no RAID arrays, aggregates or volumes are required. The admin only deals with one single filesystem.

The scale-out architecture of Isilon and its simplicity make it unique in the market and the favorite system within the ADAS industry. For a technical overview of Isilon and OneFS see [4].

Data Tiering for most efficient data retention

As stated earlier, there are often regulatory and/or contractual requirements to keep the raw data online and available for simulation, sometimes for up to 15 years. To simulate certain scenarios, or to re-simulate the whole mileage for an updated software release, the data cannot be retrieved from tape due to time restrictions. That means a different method is needed to store data on the most cost-efficient medium. Isilon supports data tiering within the filesystem to different media and even to the cloud. Tiering is policy-based and easy to maintain. A simple policy, for example, would keep new data on the fastest nodes in the cluster, while data that has not been used for some time would be moved to a lower, cheaper tier.

Figure 5: Transparent Data Tiering and Cloudpools in Isilon OneFS

For ADAS, this approach has the advantage that data on archive-type nodes like the NL410 or even HD400 can be directly accessed by the HIL or SIL applications for simulations. There is no need to tier back for occasional use. The performance and the number of reads/writes these nodes can sustain are more limited, but for a single re-simulation it is most probably faster than tiering the data back to a higher tier and running the simulation from there.

In addition, older data can be transparently tiered to an object storage system. This can reside in the local datacenter, a remote datacenter or even the cloud. For a detailed description of Isilon’s tiering functionality and Cloudpools see [11].
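The idea of policy-based tiering described in this section can be pictured with a tiny age-based rule. This sketch is purely conceptual and is not Isilon policy syntax. The tier names, the thresholds and the `choose_tier` function are all assumptions made for illustration.

```python
from datetime import datetime, timedelta

# Hypothetical thresholds; real policies are configured in the storage system.
ARCHIVE_AFTER = timedelta(days=90)       # unused for 90 days -> archive nodes
CLOUD_AFTER = timedelta(days=3 * 365)    # unused for about 3 years -> object store


def choose_tier(last_access, now):
    """Pick a target tier from the time since the data was last accessed."""
    age = now - last_access
    if age >= CLOUD_AFTER:
        return "cloud"
    if age >= ARCHIVE_AFTER:
        return "archive"
    return "performance"


now = datetime(2017, 1, 1)
for last_access in (datetime(2016, 12, 20), datetime(2016, 6, 1), datetime(2012, 1, 1)):
    print(last_access.date(), "->", choose_tier(last_access, now))
```

In the setup described above, the filesystem path stays the same whichever tier holds the data, so simulation jobs do not need to know where a recording currently lives.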

Performance

Consider the environment illustrated in figure 2. Potentially hundreds of HIL servers read streams from an Isilon system in parallel, each stream requiring a throughput of 150-400 MB/s. With 80 HIL servers reading at 250 MB/s each, the cluster needs to handle 20 GB/s of read throughput. Because Isilon distributes data and load evenly across all nodes in the system, this is absolutely possible. At the same time we may see several GB/s of ingest from newly loaded data.

With one of the recently announced nodes [12], a single 4U Isilon Scale-Out NAS All-Flash chassis (which includes a 4-node Isilon cluster) can deliver up to 15 GB/s of aggregate bandwidth. Given the above example with 80 HIL servers, it would only require two 4U chassis (= 8 Isilon nodes) to satisfy the bandwidth requirements. These two chassis have a raw capacity of about 1.84 PB. Considering that an Isilon cluster for ADAS typically contains dozens of nodes, performance is not an issue, even with other node types.
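The sizing argument above boils down to two small calculations, sketched here. The function names are made up for illustration. The 15 GB/s per chassis is the "up to" figure quoted in the text and is treated as an optimistic upper bound. Concurrent ingest traffic is deliberately left out.

```python
import math


def aggregate_read_gb_s(hil_servers, mb_s_per_stream):
    """Total read throughput in GB/s when every server reads one stream."""
    return hil_servers * mb_s_per_stream / 1000


def chassis_needed(required_gb_s, gb_s_per_chassis=15):
    """Number of 4U chassis needed, assuming each delivers its peak bandwidth."""
    return math.ceil(required_gb_s / gb_s_per_chassis)


# 80 HIL servers at the low end, the example value and the high end of the range.
for stream_mb_s in (150, 250, 400):
    need = aggregate_read_gb_s(80, stream_mb_s)
    print(f"{stream_mb_s} MB/s per stream: {need:.0f} GB/s, {chassis_needed(need)} chassis")
```

A real sizing would add headroom for the ingest traffic mentioned above and would not assume peak bandwidth on every chassis.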

Availability

ADAS features are becoming more and more important as a value proposition in the market, and new features will even enable new business models. That means time to market is a very important aspect. Considering the relatively long simulation times, the availability and reliability requirements for the storage system are enormous. Not only is data loss a very expensive event; any system unavailability will also potentially stop hundreds of simulations and result in hundreds of wasted engineering hours.

Isilon protects data via various technologies, such as:

- Distributed erasure coding across nodes with flexible protection level. This protection level can for example range from n+1 to n+4 and it can be set by policy for specific directories, pools, file type, ownership, sizes and other attributes.

- High speed parallel replication to one or more remote locations

- Up to 20,000 snapshots on the local as well as remote filesystems

- The ability to lock directory content for an arbitrary retention time (e.g. if regulatory or contractual requirements demand that specific data be made un-erasable or un-changeable).
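To make the protection levels in the first bullet more tangible, the capacity cost of an n+m erasure-coded stripe can be estimated as m / (n + m). The sketch below applies that general formula. The stripe width of 16 data units is an assumption chosen for illustration and not a statement about how OneFS lays out any particular file.

```python
def protection_overhead(data_units, protection_units):
    """Fraction of raw capacity consumed by protection in an n+m stripe."""
    return protection_units / (data_units + protection_units)


# Assumed stripe of 16 data units with protection levels n+1 to n+4.
for m in range(1, 5):
    overhead = protection_overhead(16, m)
    print(f"16+{m}: {overhead:.1%} of raw capacity used for protection")
```

For comparison, keeping two full copies of the data would cost 50% of raw capacity, which is why erasure coding matters at petabyte scale.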

Multi-Protocol Access

As mentioned above, modern ADAS development and simulation environments require the capability to store, retrieve and modify data through many different protocols at the same time. Isilon offers that capability with a wide range of supported protocols [9], where all protocols leverage the same system services such as authentication, authorization and role-based access control within OneFS.

Summary

ADAS is the fastest growing segment in automotive electronics [1], and ADAS features in cars are becoming a key business differentiator for all car manufacturers and suppliers. Due to the technical evolution of sensor technology, development and simulation of ADAS have massive capacity and performance requirements for shared storage, which can only be satisfied by a distributed filesystem architecture. The majority of ADAS development companies use Isilon for that reason. It is simple to use and doesn’t require excessive labor hours to run and maintain. Isilon is very mature and has been on the market since the early 2000s. Gartner has recently evaluated scale-out filesystems and object storage systems and has rated Dell EMC as a leader in this space [6].

Figure 6: Gartner Magic Quadrant for Scale-Out File- and Object-Systems [6]

Due to the huge data volume, the performance requirements and the HIL simulation, cloud offerings are currently not a valid option for this workload. This might change when the industry can move from HIL to almost 100% SIL simulation, but it is very questionable whether the cloud can deliver a better price per TB at this scale.

References

[1] https://en.wikipedia.org/wiki/Advanced_driver_assistance_systems

[2] Ian Riches (2014-10-24). "Strategy Analytics: Automotive Ethernet: Market Growth Outlook | Keynote Speech 2014 IEEE SA: Ethernet & IP @ Automotive Technology Day". IEEE. Retrieved 2014-11-23.

[3] Automated Driving; Levels of driving automation, SAE International, https://www.sae.org/misc/pdfs/automated_driving.pdf

[4] EMC Isilon OneFS: A Technical Overview, White Paper

https://support.emc.com/docu44126_White_Paper:_EMC_Isilon_OneFS:_A_Technical_Overview.pdf?language=en_US

[5] High Availability and Data Protection with EMC Isilon Scale-Out NAS, White Paper

https://support.emc.com/docu42429_White_Paper:_High_Availability_and_Data_Protection_with_EMC_isilon_Scale-Out_NAS.pdf?language=en_US

[6] Magic Quadrant for Distributed File Systems and Object Storage, Published: 20 October 2016, ID: G00307798, Julia Palmer, Arun Chandrasekaran, Raj Bala

https://www.gartner.com/doc/reprints?id=1-3HYASF6&ct=160919&st=sb

[7] Dell EMC Press release: #1 Scale-Out NAS System Dell EMC Isilon Goes All-Flash for Unstructured Data,

https://www.emc.com/about/news/press/2016/20161019-05.htm

[8] How to access data with different Hadoop versions and distributions simultaneously, blog post, Stefan Radtke

http://stefanradtke.blogspot.ie/2015/05/how-to-access-data-with-different-hdfs.html

[9] OneFS Multiprotocol Security Untangled, White Paper

https://support.emc.com/docu53353_White_Paper:_OneFS_Multiprotocol_Security_Untangled.pdf?language=en_US

[10] OneFS 8.0.1 API Reference

https://support.emc.com/docu79794_OneFS_8.0.1_API_Reference.pdf?language=en_US

[11] Transparent Storage Tiering to the Cloud using Isilon Cloudpools, blog post, Stefan Radtke

http://stefanradtke.blogspot.de/2016/02/transparent-storage-tiering-to-cloud.html

[12] Press Release: #1 Scale-Out NAS System Dell EMC Isilon Goes All-Flash for Unstructured Data

http://www.emc.com/about/news/press/2016/20161019-05.htm